That means that 12mp sensors, your "very good balance", have over twice as much "blur" as they need at f8. Sensors have enough "blur" added so there won't be problems with aliasing at f2.8. I do math like cepstral (homomorphic) blind deconvolution of lens and sensor point spread functions, but that level of math is not needed for this discussion. I tried to keep the hard math out of my discussion. Read the thread that Chris linked, especially the posts near the end dealing with the convolution (mathematical combining) of lens diffraction and sensor AA filtering. And read what I wrote elsewhere in this thread.

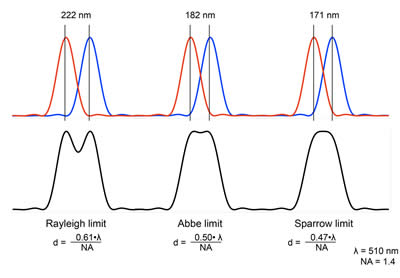

If that is what you said (and it doesn't sound like what you said, but that's another issue) then you are simply incorrect. I didn't say that the MP count doesn't matter, I said that 12 MP appeared to be a very good balance between quality of image from the sensor and losses due to lens diffraction at smaller apertures. There are valid reasons that a higher MP count offers improved IQ, given you are not lens limited. If MP count does not matter, how do you explain that low-ISO D3x prints look "sharper" than D3s prints at large sizes? And it gives us more processing power per unit area (same cost) of processor chip to chew that data, and more storage, per unit area, of RAM and flash chips, to store the data. Moore's law gives us more sensing elements per unit area of sensor each year. The neat thing is that the higher resolution comes, essentially, free. (And yes, I know that 1.3 microns means a 0.5 gigapixel full frame camera.) We'd go back to the diffraction limits of lenses actually looking like diffraction limits. But it would give you almost twice the resolution at f8, more than twice the resolution at f11. It wouldn't give you 4.4x higher resolution than a 12mp camera. Now, if you had a sensor pitch of 1.3 microns, you'd get a camera where the 3.7 micron spread of an f2.8 lens would be all the anti-aliasing that the camera needed. As the MP count gets larger, they can use that to reduce the amount of AA filtering used. Because a 12 megapixel camera can only extract 100% of the capability of lenses when their diffraction is very small, under the f4 or f2.8 that the camera is designed for.
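As a quick check on the "0.5 gigapixel" remark, here is a minimal sketch that counts how many pixels of a given pitch fit on a full frame sensor. It assumes the usual 36 x 24 mm frame and square pixels with no gaps; the function name is just for illustration.

```python
def megapixels_for_pitch(pitch_um, width_mm=36.0, height_mm=24.0):
    """Approximate pixel count (in megapixels) for square pixels of the
    given pitch tiled across a sensor. Defaults assume a 36 x 24 mm
    full frame sensor; real sensors lose a little area to borders."""
    cols = int(width_mm * 1000 / pitch_um)   # whole pixels across
    rows = int(height_mm * 1000 / pitch_um)  # whole pixels down
    return cols * rows / 1e6


# 1.3 micron pitch on full frame: roughly half a gigapixel.
print(f"{megapixels_for_pitch(1.3):.0f} MP")
```

Under those assumptions a 1.3 micron pitch comes out at a little over 500 MP, which is where the half-gigapixel figure comes from.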

But, because the camera has an AA filter expanding its PSF, you end up being able to see the 10.7 microns of f8 quite easily. And that's why the "megapixel war" makes sense. So, without an AA filter, you would not be able to see diffraction, at all, until the Airy disc diameter hit about 15.8 microns, which happens at f13. Check the Cambridge in Colour article that Max cites: f4 is enough to contribute an additional 5.3 microns of point spread, and camera makers tend to use either f4 or f2.8 (3.7 microns) as the "lens contribution" to the anti-aliasing equation. But the camera maker counts on the lens contributing some spread, due to (the trumpets blare!) diffraction. Technically, you need 15 microns of spread to eliminate all aliasing, because the green "luminance" pixels are at sqrt(2) * the total pixel pitch. So, the camera designer will typically add an AA filter with a point spread (literally "blur", it "spreads" points of light into small squares) of about 5-10 microns.
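For anyone who wants to reproduce the spread figures, here is a rough sketch using the standard Airy disc diameter formula, 2.44 * wavelength * f-number. The wavelength of 0.546 microns (green light) is my assumption, picked because it reproduces the 3.7, 5.3 and 10.7 micron figures above. The `full_antialias_spread_um` helper is just one reading of the sqrt(2) argument (two green-pixel spacings), not something taken from a camera maker.

```python
import math

WAVELENGTH_UM = 0.546  # assumed green light, in microns


def airy_disc_diameter_um(f_number, wavelength_um=WAVELENGTH_UM):
    """Airy disc diameter (out to the first dark ring), in microns."""
    return 2.44 * wavelength_um * f_number


def full_antialias_spread_um(pixel_pitch_um):
    """Spread of two green-pixel spacings, where the green pixels sit
    sqrt(2) * pitch apart on the diagonal (an interpretation of the
    argument above, not a manufacturer's figure)."""
    return 2 * math.sqrt(2) * pixel_pitch_um


for n in (2.8, 4, 8):
    print(f"f/{n}: {airy_disc_diameter_um(n):.1f} microns")

print(f"5.6 micron pitch: {full_antialias_spread_um(5.6):.1f} microns")
```

The exact f-stop at which the Airy disc reaches a given diameter shifts with the wavelength you assume, so treat the crossover point as approximate.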

To prevent this, properly designed cameras include an anti-aliasing (AA) filter, something that reduces the high spatial frequencies (detail) in images. A 12mp camera with an APS sensor has a pixel pitch around 5.6 microns. "However, my example above shows that the 15 megapixel Canon 50D or Canon T1i is already "diffraction limited" when the lens is stopped down to "only" F8." Digital cameras are subject to aliasing, to the creation of moire (patterns at angles or of different frequencies than patterns that actually occur in the image), jaggies, and stairstep patterns.
